The value of being wrong, by Dr Neil Calder

So, what is the value in getting it wrong? It seems counterintuitive, but it is an interesting question to unpack, and it naturally draws in a range of prejudices and inbuilt assumptions about what is right, or correct. In manufacturing metrology, this most obviously means what’s on the face of the drawing or within a process specification, but the reality is more nuanced.

I’m going to go a bit Zen here and theorise that everything is imperfect, impermanent and incomplete. This is as true on the manufacturing shop floor as it is in other areas of life. Metrology, and our reliance on it, can create a seductive illusion of black and white, of go and no-go, and of the binary acceptability of a tolerance band on quantifiable features. In reality, it’s all more like shades of grey.

My usual day job as a Monitoring Officer for government-grant-funded industrial innovation projects has me invariably raising the question of “how much is enough?” whenever I’m presented with a measurement or quantification of technical results. It is an easy, deceptively trivial question to ask, but usually a much harder one to answer. And that assumes there even is a comprehensible or meaningful answer to such a simple question.

This has led me to appreciate the value of innovation counselling, which relies very heavily on embracing the rich value inherent in the learning that comes from missing the target. In industrial R&D, it’s the stance of being able to expect the unexpected and to maintain a degree of elasticity, or compliance, when it comes to technical progress. Holding too rigidly to a plan creates a brittleness in thinking that can cloud judgement and lead to confirmation bias.

Within the context of automated manufacturing decision making, a critical facet of digital manufacturing, there’s a fundamental need to generate errors or nonconformances in order for machine learning processes to actually do their learning bit. If every shot lands on target, then our understanding of what contributes to that outcome is obscured. I recently encountered exactly this phenomenon on a digital manufacturing process development project involving a large UK aeroengine manufacturer. In a production system where errors and nonconformances are measured in a small number of parts per million (numerically, Six Sigma equates to around 3.4 defects per million opportunities), the messy stuff at the edge of what’s acceptable is actually gold dust for highlighting manufacturing process vulnerabilities and quality characteristics.
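
The “around three per million” figure depends on a convention that is worth making explicit. Below is a minimal sketch in Python, assuming the usual Six Sigma practice of allowing a 1.5-sigma long-term drift in the process mean and counting only the nearer specification limit. The function names are hypothetical illustrations, not taken from any particular quality tool.

```python
import math


def upper_tail_probability(z: float) -> float:
    """Probability that a standard normal variable exceeds z (one tail only)."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def defects_per_million(sigma_level: float, shift: float = 1.5) -> float:
    """Defects per million opportunities (DPMO) at a given sigma level.

    Assumes the conventional 1.5-sigma long-term mean shift, so the nearer
    specification limit sits (sigma_level - shift) standard deviations away
    and the far tail is ignored as negligible.
    """
    return upper_tail_probability(sigma_level - shift) * 1_000_000


for level in (3, 4, 5, 6):
    print(f"{level} sigma: {defects_per_million(level):,.1f} DPMO")
```

Run as written, the six-sigma row comes out at roughly 3.4 DPMO, the figure usually quoted. Without the 1.5-sigma shift, the same calculation over both tails gives around two defects per billion, which is why the convention matters when comparing quoted defect rates.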

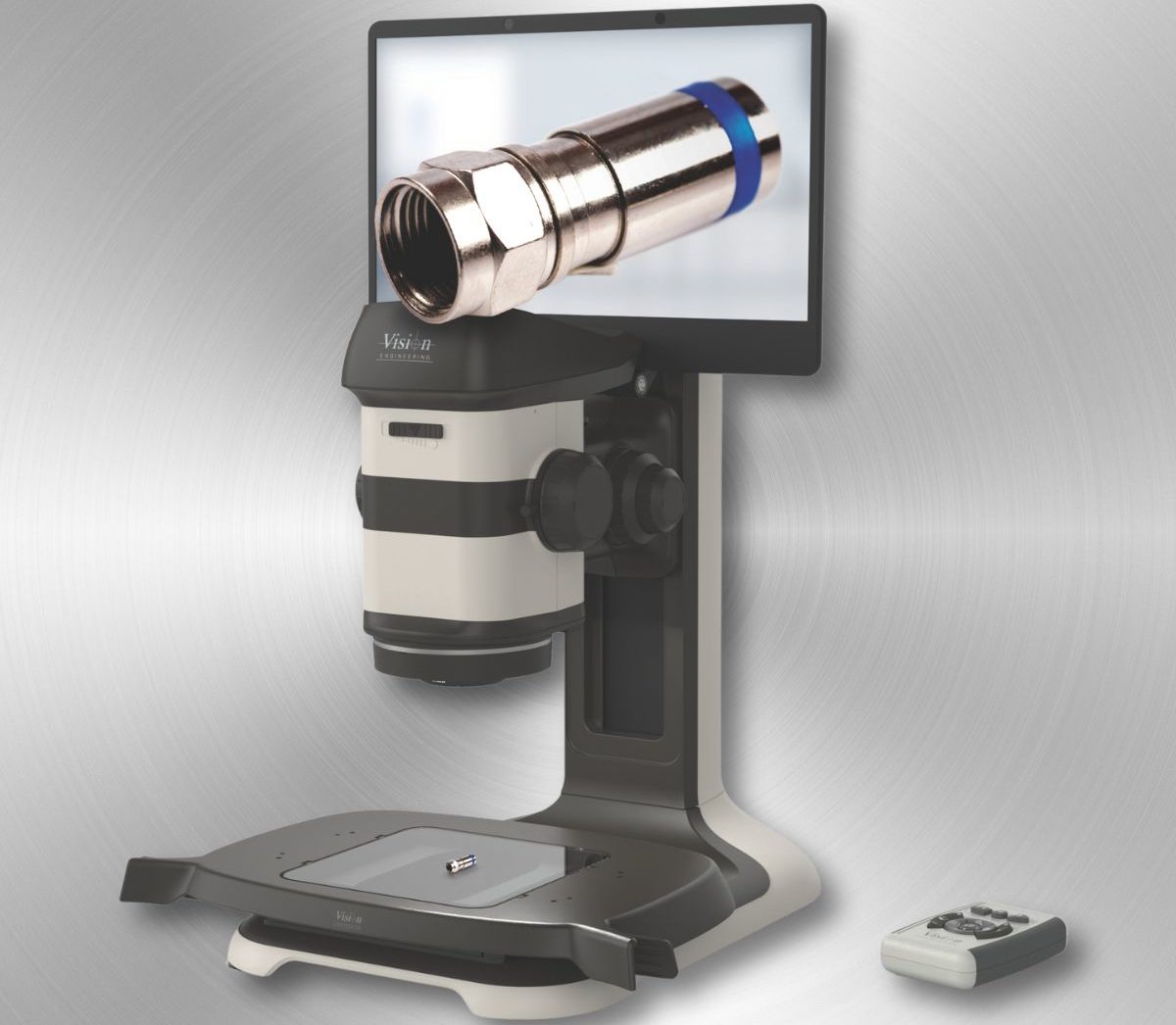

It’s never been easier to generate very large volumes of highly accurate measurement data from manufacturing processes, but how useful is this really if you are drowning in it? By analogy with Heisenberg’s uncertainty principle, the more precisely you know the what, the further you get from the why.

Any feedback control system, whether mechanical, electrical or digital, requires the existence of an error in order to function. It can be a voyage of discovery to deliberately introduce perturbations into the system to see how it responds, how errors accumulate and multiply, and therefore how they can be avoided or the situation improved. Similarly, production quality systems require a healthy feed of “bad” in order to properly understand what “good” is.
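
The point that a controller needs an error to function can be made concrete with a toy loop. This is a minimal sketch, assuming an integrating process under purely proportional control, with a constant load disturbance deliberately injected halfway through. The function `simulate` and every parameter value are hypothetical, chosen only for illustration.

```python
def simulate(setpoint: float, kp: float, steps: int,
             load_start: int, load: float) -> list[float]:
    """Toy discrete-time loop: an integrating process under proportional control.

    From step `load_start` onwards a constant load disturbance is injected,
    mimicking a deliberate perturbation of the system. Stable for 0 < kp < 2.
    """
    value = 0.0
    trace = []
    for k in range(steps):
        error = setpoint - value          # the controller only ever sees the error
        correction = kp * error           # proportional action: zero error -> zero action
        disturbance = load if k >= load_start else 0.0
        value += correction + disturbance # process integrates correction plus load
        trace.append(value)
    return trace


trace = simulate(setpoint=10.0, kp=0.5, steps=60, load_start=30, load=-1.0)
print(f"before perturbation: {trace[29]:.3f}")
print(f"after perturbation:  {trace[-1]:.3f}")
```

The process settles at the setpoint of 10 before the perturbation and at 8 afterwards. That residual error of 2.0 is not a fault: with a load of -1.0 and a gain of 0.5, it is exactly the error the proportional controller needs in order to generate a correction of +1.0 and hold the process steady. Adding integral action would remove the offset, but only by accumulating past error.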

So, it’s not necessarily about getting it right, but about what you do with the information that comes from measurement. There’s a saying that good judgement comes from experience, and experience comes from bad judgement. Or … you have to break a few eggs to make an omelette.